Key Considerations When Designing Privacy Education Materials

This report summarizes research-informed considerations that have supported Meta’s approach to developing privacy guides and can assist others in creating effective privacy education materials.

Obinna Ugwu,

Alice Shan,

Jingjing Jiang,

Archana Vaidyanathan

Building privacy efficacy may earn trust and unlock use of AR/MR products

Product experiences that address people’s perceived privacy problems can build “privacy efficacy,” or users’ confidence in privacy. Across multiple studies, we found that respondents who had higher privacy efficacy were more likely to trust and use Augmented Reality (AR) smart glasses and Mixed Reality (MR) headsets.

We built a Trust Framework to help product developers understand how proactively designing for privacy may earn people’s trust and facilitate their use of AR/MR products.

Meenakshi Menon, Ph.D, Stacy Blasiola, Ph.D, Chelsea Cormier McSwiggin, Ph.D, Fernanda Herrera, Ph.D, Kasey Jones, Sarah Pearman, Jocelyn Rosenberg, Ph.D, Danny Ullman, Ph.D & Breian Witts

Boosting privacy confidence with context screens

Arezoo (Auriana) Talbott UX Researcher, Meta

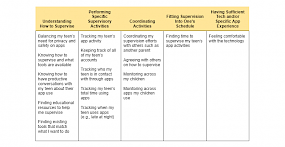

Helping parents and guardians support their teens online

Kathleen Kremer, Ph.D UX Researcher, Meta

How do teens learn about digital privacy, and what support do they need?

When people begin using apps and other digital services in their teenage years, how do they learn about privacy? And what can others do to help them acquire the knowledge and skills they need to have a safe and healthy relationship with digital products?

Dan Busso,

Denise Sauerteig UX Researcher, Meta

Evidence that education can build users’ confidence about their privacy on Messenger

Can apps help people feel more confident about their privacy by providing reminders of the privacy controls that they offer?

Meenakshi Menon, Ph.D Quantitative UX Researcher, Meta

Exploring privacy concerns and expectations for enterprise products

Privacy is a key driver of trust and adoption when businesses consider enterprise tools (software and hardware built for the needs of a business or organization). Yet, little research exists about the privacy needs that decision makers keep in mind when they’re evaluating enterprise software or hardware. Because identifying and understanding these needs could help enterprise developers create more useful tools for businesses, we conducted research to advance this topic.

Carmen Lefevre-Lewis, Ph.D UX Research Manager, Meta

Privacy news about one brand can create a “spillover effect” for other brands

This research was motivated by a simple but intriguing question:

When someone reads a news article about the privacy practices of one app, does their reaction “spill over” and affect how they think about other, unrelated apps?

We were inspired to ask this question based on two past research insights.

Justin Hepler, Ph.D Quantitative UX Researcher, Meta,

Kenneth Nguyen, Ph.D Quantitative UX Researcher, Meta

Self-efficacy and privacy concerns predict reported use of privacy controls on Messenger

According to Protection Motivation Theory, people are motivated to take protective actions against perceived threats when they believe they can successfully address those threats. This led us to hypothesize that Messenger users may be most likely to use privacy controls when they have a privacy concern and also have high self-efficacy. We surveyed Messenger users across eight countries to test this hypothesis, and we found that respondents were most likely to report using Messenger’s end-to-end encryption and app lock controls if they had relevant privacy concerns and high self-efficacy for using Messenger’s privacy controls.

Meenakshi Menon, Ph.D Quantitative UX Researcher, Meta

How can companies help people understand privacy-enhancing technologies like on-device learning?

Privacy-enhancing technologies such as “on-device learning” can protect people’s privacy but can be complex and challenging to understand. We interviewed privacy experts and non-experts to learn how we might better communicate about these kinds of technologies, with a focus on on-device learning.

Lauren Kaplan, Ph.D UX Researcher, Meta

Digital literacy insights can help improve privacy experiences

“Digital literacy” refers to how well someone is able to understand, manage, and interact with information through digital technologies (Law et al., 2018). Perhaps unsurprisingly, digital literacy has important relationships with digital privacy (Büchi et al., 2017). For example, people with low digital literacy tend to have fewer privacy concerns and tend to use privacy features less frequently relative to people with high digital literacy (e.g., Baruh et al., 2017; Brough & Martin, 2020; Bartsch & Dienlin, 2016; Levin & Redmiles, 2021). Further, when people with low digital literacy do use privacy settings, they often lack confidence in their ability to use them effectively (Hargittai, 2010).

Nadine Levin, Ph.D UX Researcher, Meta,

Justin Hepler, Ph.D Quantitative UX Researcher, Meta

How to make privacy settings easier to find using better names and organization

For people to have positive privacy experiences when using an app, companies need not only to include the right privacy settings but also to ensure those settings are easy to find. At Facebook, we hypothesized that we might have an opportunity to make our settings easier to find for two reasons. First, over the past several years, the Facebook app has evolved to include many new features, which has greatly expanded the number and types of privacy settings in the app’s settings menu. Second, consumers’ expectations about which privacy settings exist in Facebook, what they’re called, and how to find them may have changed over time, shaped not only by their use of Facebook itself but also by their use of other products that may design privacy settings differently.

Denise Sauerteig UX Researcher, Meta,

Sarah Kling UX Researcher, Meta

PB&J: A new way to measure privacy concerns

Traditional ways of measuring users’ privacy concerns can produce ambiguous results that are difficult to take action on in applied settings. The Privacy Beliefs and Judgments (PB&J) framework is a new approach to measuring privacy concerns that addresses these limitations. It’s flexible and can be adapted to measure privacy concerns for a variety of topics and products.

Justin Hepler, Ph.D Quantitative UX Researcher, Meta

Research on parental involvement can help guide the privacy design of kids’ apps

- As kids grow, they develop an increasing need for autonomy and privacy. Yet parents have a continual need to stay informed about their kids' lives and social interactions.

- How should apps designed for kids balance privacy and parental involvement needs to deliver the right kinds of experiences to kids and their parents? In this article, I discuss research on parental involvement that informed the approach used to design privacy-related features on the Messenger Kids app.

Meenakshi Menon, Ph.D Quantitative UX Researcher, Meta

Privacy concerns are similar across different apps

We conducted a survey to measure privacy concerns across a range of popular apps and topics. In light of these results, we discuss the implications for how companies might address users’ privacy concerns about their products.

Justin Hepler, Ph.D Quantitative UX Researcher, Meta,

Maryhope Rutherford, Ph.D UX Researcher, Meta

Users’ top-of-mind privacy concerns

Privacy is an ambiguous term that can refer to many things. To effectively address users’ privacy concerns, companies must know what specific topics are concerning to users.

Justin Hepler, Ph.D Quantitative UX Researcher, Meta,

Stacy Blasiola, Ph.D UX Researcher, Meta