How might we...

...Uncover the layers of machine learning & provide consent within a group scenario

By reducing the speed and increasing the transparency of machine processes, Friendlee aims to make these often hidden processes more visible to the user and easier to control.

Friendlee is a social app that lets people organise their different friendship groups and, within each group, share photos, videos and messages and manage events.

To provide the service, Friendlee relies on data such as the following:

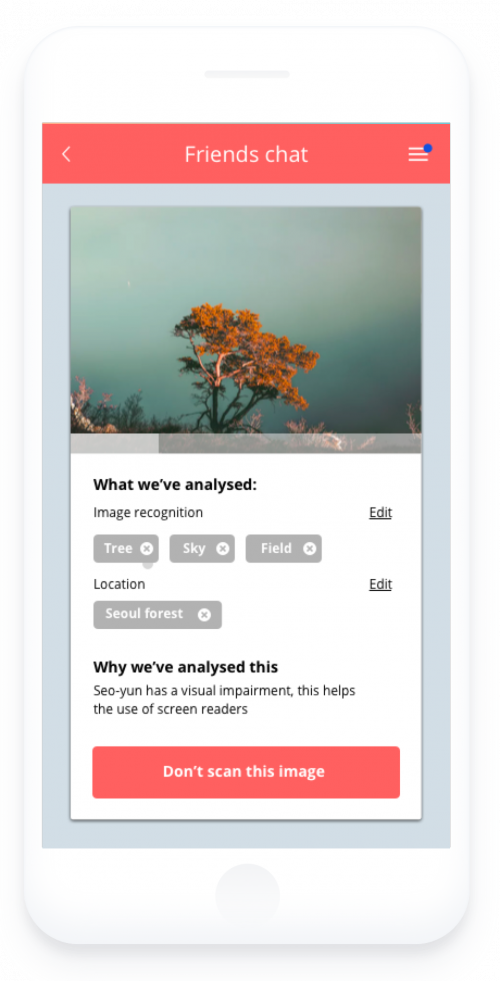

Some people find it hard to understand why machine learning or algorithms might be monitoring their content or conversations. Those reasons aren’t always negative: one example is analysing images to provide text descriptions for the visually impaired. This prototype explores those processes and aims to make them more transparent within the context of group messaging.

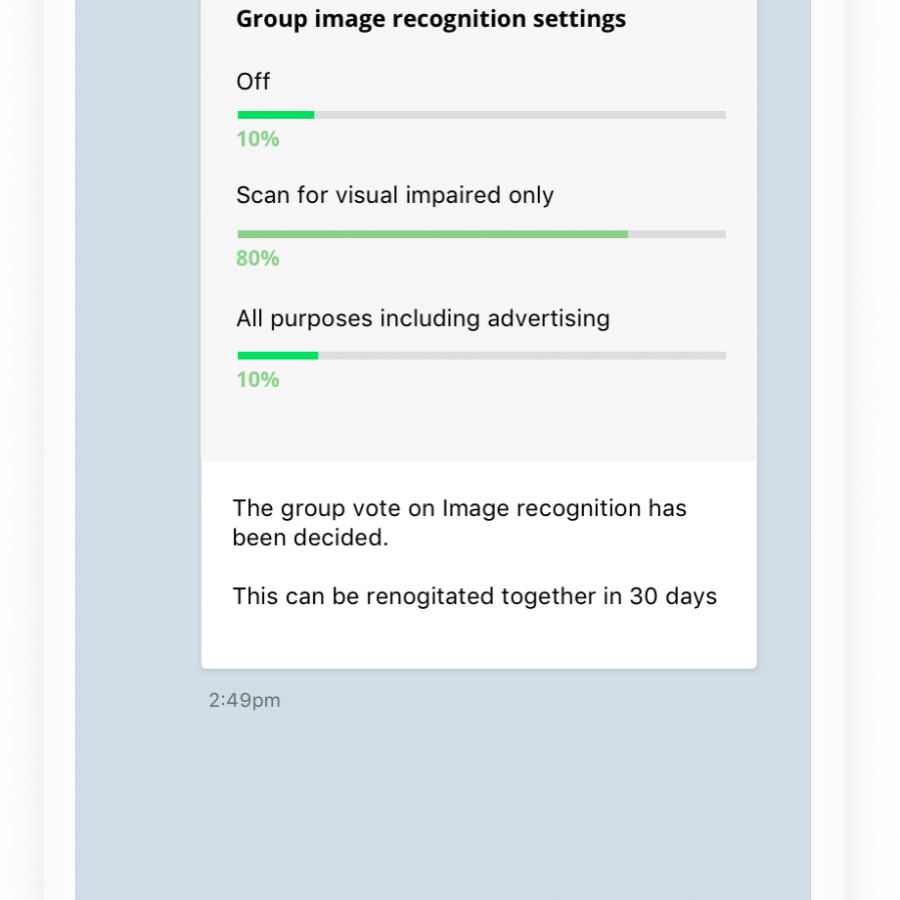

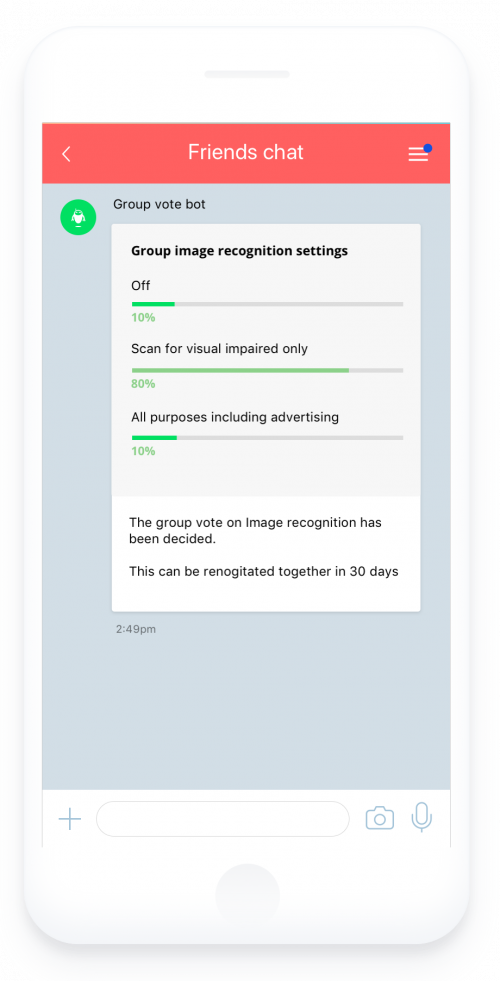

How could consent work within a group scenario? What if you were bound to the group's decisions on privacy settings? Would this democratic approach make you feel safer and more informed, or less in control?

This prototype introduces the idea of voting on privacy settings. Before joining a group chat, a user is shown the privacy preferences the group has decided upon and is asked to accept or reject them.
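The voting and accept-or-reject flow could be sketched roughly as follows. This is only an illustration: the prototype does not specify how votes are counted, so the simple-majority rule, the tie-break towards the more private option, and the names `decide_group_preferences`, `join_group`, `image_analysis` and `message_scanning` are all assumptions made for this example.

```python
def decide_group_preferences(votes):
    """Tally each member's votes and return one decision per setting.

    `votes` maps a member name to a dict of {setting_name: allowed}.
    Assumption: simple majority wins, and a tie resolves to False,
    i.e. the more private option.
    """
    tallies = {}
    for member_votes in votes.values():
        for setting, allowed in member_votes.items():
            yes, no = tallies.get(setting, (0, 0))
            tallies[setting] = (yes + 1, no) if allowed else (yes, no + 1)
    return {setting: yes > no for setting, (yes, no) in tallies.items()}


def join_group(members, newcomer, accepted):
    """Return the member list after the newcomer accepts or rejects
    the group's privacy preferences. Rejecting leaves the group unchanged."""
    return members + [newcomer] if accepted else list(members)


# Hypothetical example: three members vote on two made-up settings.
example_votes = {
    "ana": {"image_analysis": True, "message_scanning": False},
    "ben": {"image_analysis": True, "message_scanning": True},
    "cai": {"image_analysis": False, "message_scanning": False},
}
group_preferences = decide_group_preferences(example_votes)
```

A newcomer would be shown `group_preferences` before joining, and `join_group` would only add them if they accept.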

Both buttons are given the same prominence so that people do not feel swayed either way.

Instead of striving for ever faster interactions, what if they were deliberately slowed down, peeling away the layers to give people more transparency and control? This could also give people time to interact with the mechanism, change it or even stop it.

This app demonstrates an image scanning process that a person can watch to see what is being analysed and why. By visibly and artificially slowing down a process that is normally executed immediately and out of our reach, the app sets up a window of time during which people can, if they wish, stop the process and avoid having their photo analysed.
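One way to picture the deliberate slow-down is a loop that announces each analysis step, then waits for a short window in which the person can cancel. The sketch below is a minimal illustration, not the prototype's implementation: the function name `slow_scan`, the step names and the delay value are hypothetical.

```python
import threading


def slow_scan(steps, delay_seconds, cancel_event, on_step=print):
    """Run analysis steps one at a time, pausing before each so it can be stopped.

    `steps` is a list of (name, reason) pairs. Before each step the reason is
    shown, then we wait up to `delay_seconds` for `cancel_event` to be set.
    Returns (completed_step_names, was_cancelled).
    """
    completed = []
    for name, reason in steps:
        on_step(f"About to run '{name}': {reason}")
        # Event.wait returns True as soon as the event is set,
        # or False if the timeout elapses without cancellation.
        if cancel_event.wait(timeout=delay_seconds):
            return completed, True
        completed.append(name)  # a real app would run the analysis here
    return completed, False


# Hypothetical steps, loosely based on the purposes mentioned in the text.
EXAMPLE_STEPS = [
    ("detect objects", "to attach tags to the photo"),
    ("describe image", "to provide a text description for screen readers"),
]
```

In an interface, a "stop" button would call `cancel_event.set()` from another thread, ending the scan before the next step begins.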

While people are still given an upfront choice to accept or deny photo processing, this feature adds nuance to their consent and gives them granular control over privacy. The design is more transparent and informs people about the kind of data inferred from their content. Once an image has been analysed, people can access the tags that were attributed to it and edit them if they do not fit. This will be especially useful for providing a better experience for the visually impaired. Granular interactions make data purposes more tangible for people and strengthen the value exchange between an AI, one user and the community.
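As a rough illustration of editable tags, an analysed image could keep its inferred tags in a structure that people can correct, with the text description derived from whatever tags remain. The class `AnalysedImage`, its methods and the description wording are hypothetical and only meant to make the idea concrete.

```python
from dataclasses import dataclass, field


@dataclass
class AnalysedImage:
    """An image plus the tags inferred for it (hypothetical structure)."""
    filename: str
    tags: list = field(default_factory=list)

    def replace_tag(self, old, new):
        """Swap a tag that does not fit for a corrected one."""
        self.tags = [new if tag == old else tag for tag in self.tags]

    def remove_tag(self, tag):
        """Drop a tag the person does not want attached to the image."""
        self.tags = [t for t in self.tags if t != tag]

    def text_description(self):
        """Build a simple description, e.g. for a screen reader."""
        if not self.tags:
            return "Image with no description"
        return "Image containing: " + ", ".join(self.tags)
```

For example, correcting a mistaken `"dog"` tag to `"cat"` with `replace_tag` would also change the description produced by `text_description`.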

How might we build on Friendlee’s ideas to: